CogDriver: Integrating Cognitive Inertia for Temporally Coherent Planning in Autonomous Driving

Abstract

The pursuit of autonomous agents capable of temporally coherent planning is hindered by a fundamental flaw in current vision-language models (VLMs): they lack cognitive inertia. Operating on isolated snapshots, these models cannot form a continuous understanding of the environment, which leads to erratic decision jitter and a failure to execute complex, multi-step maneuvers. To remedy this, we introduce CogDriver, a framework that builds a stable internal representation by instilling cognitive inertia. Our work makes two key contributions: (1) We present CogDriver-Data, a large-scale vision-language-action dataset whose narrative annotations provide the supervisory signal for learning temporal dynamics and persistent intent. (2) We develop CogDriver-Agent, an architecture featuring a sparse temporal memory that maintains a stable internal state, enabled by a spatiotemporal knowledge distillation approach that explicitly teaches decision coherence. Comprehensive experiments validate our paradigm: CogDriver-Agent achieves a 22% increase in closed-loop Driving Score on Bench2Drive and a 21% reduction in mean L2 error on nuScenes, establishing a new state of the art. These gains in both long-term decision-making and imitation accuracy provide strong evidence that our agent maintains a temporally coherent internal state, bridging the gap toward more reliable autonomous driving.
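For readers unfamiliar with the open-loop metric named in the abstract, the following is a minimal sketch of how a mean L2 error and a relative reduction are commonly computed. It is an illustration under stated assumptions, not the authors' evaluation code: the function names `mean_l2_error` and `relative_reduction`, the toy waypoints, and the baseline value of 1.0 are all hypothetical, and this page does not state which averaging protocol (for example, which prediction horizons) is used on nuScenes.

```python
import math

def mean_l2_error(pred, gt):
    """Average Euclidean distance between matching (x, y) waypoints.

    Hypothetical helper: the exact nuScenes averaging protocol is not
    specified on this page, so this is the plainest per-waypoint mean.
    """
    if len(pred) != len(gt) or not pred:
        raise ValueError("trajectories must be non-empty and equally long")
    return sum(math.dist(p, g) for p, g in zip(pred, gt)) / len(pred)

def relative_reduction(baseline, new):
    """Fractional reduction of `new` relative to `baseline`."""
    return (baseline - new) / baseline

# Toy waypoints in metres (made up for illustration only).
gt = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0)]
pred = [(0.0, 0.0), (1.0, 0.3), (2.0, 0.6)]

error = mean_l2_error(pred, gt)  # per-waypoint errors: 0.0, 0.3, 0.6

# A hypothetical baseline error of 1.0 m falling to 0.79 m corresponds
# to a relative reduction of about 21%.
reduction = relative_reduction(1.0, 0.79)
```

Under this reading, a "21% reduction" is a relative change against a prior method's error, not an absolute difference in metres.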

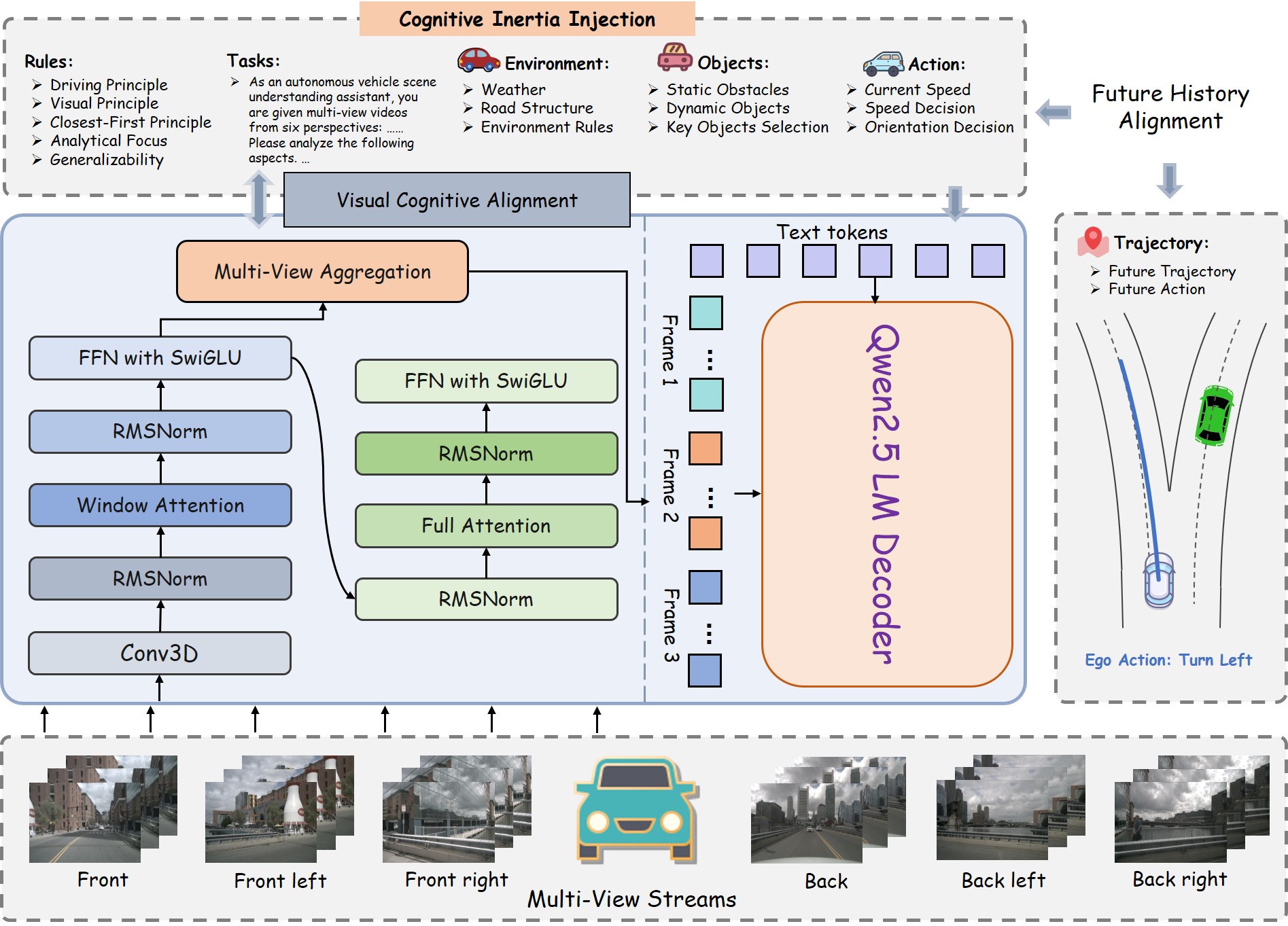

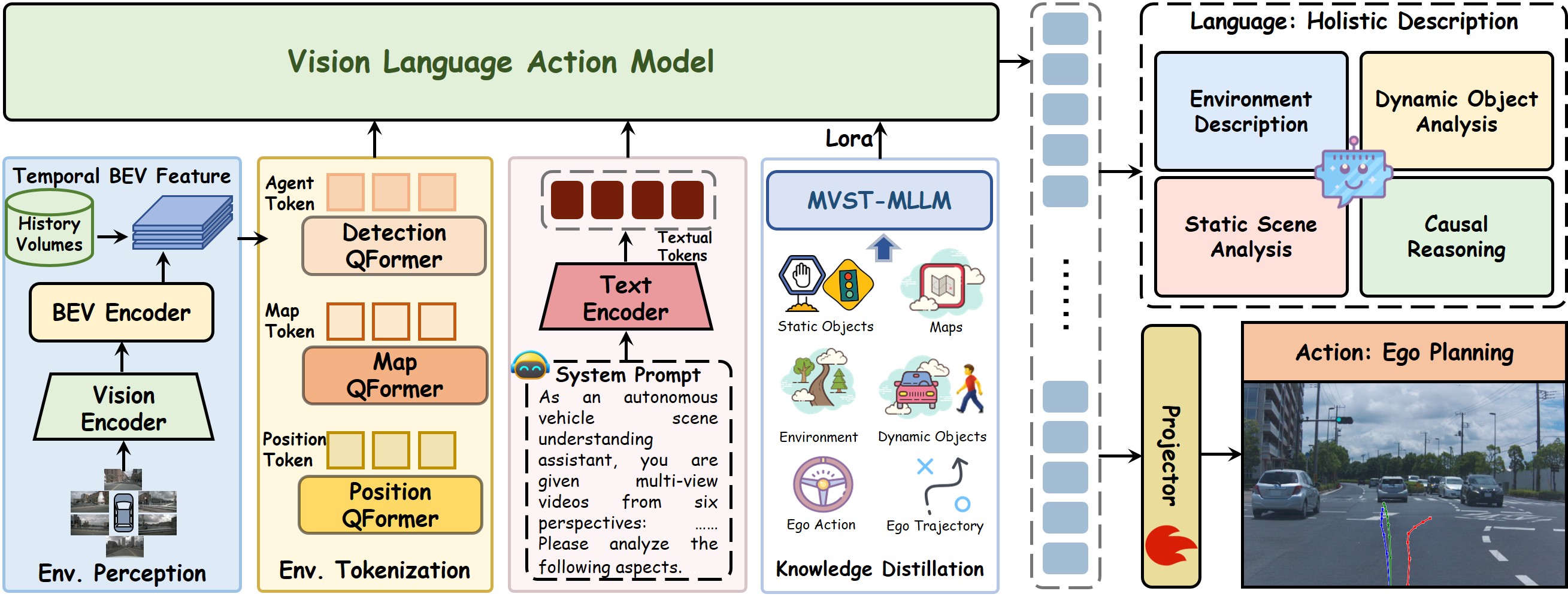

An overview of CogDriver-Agent. It moves beyond reactive decision-making by harnessing a pre-trained language model to maintain cognitive inertia. It does so by building a stable internal world model that continuously integrates 3D perception, ego states, and language commands. This allows the model to generate plans that are not only context-aware but also temporally coherent. The model's effectiveness is demonstrated by its state-of-the-art performance on both open-loop trajectory planning and complex closed-loop driving tasks. Its success highlights a distinctive capability: bridging perception with action through a persistent, evolving strategy rather than disconnected stimulus-response mappings.

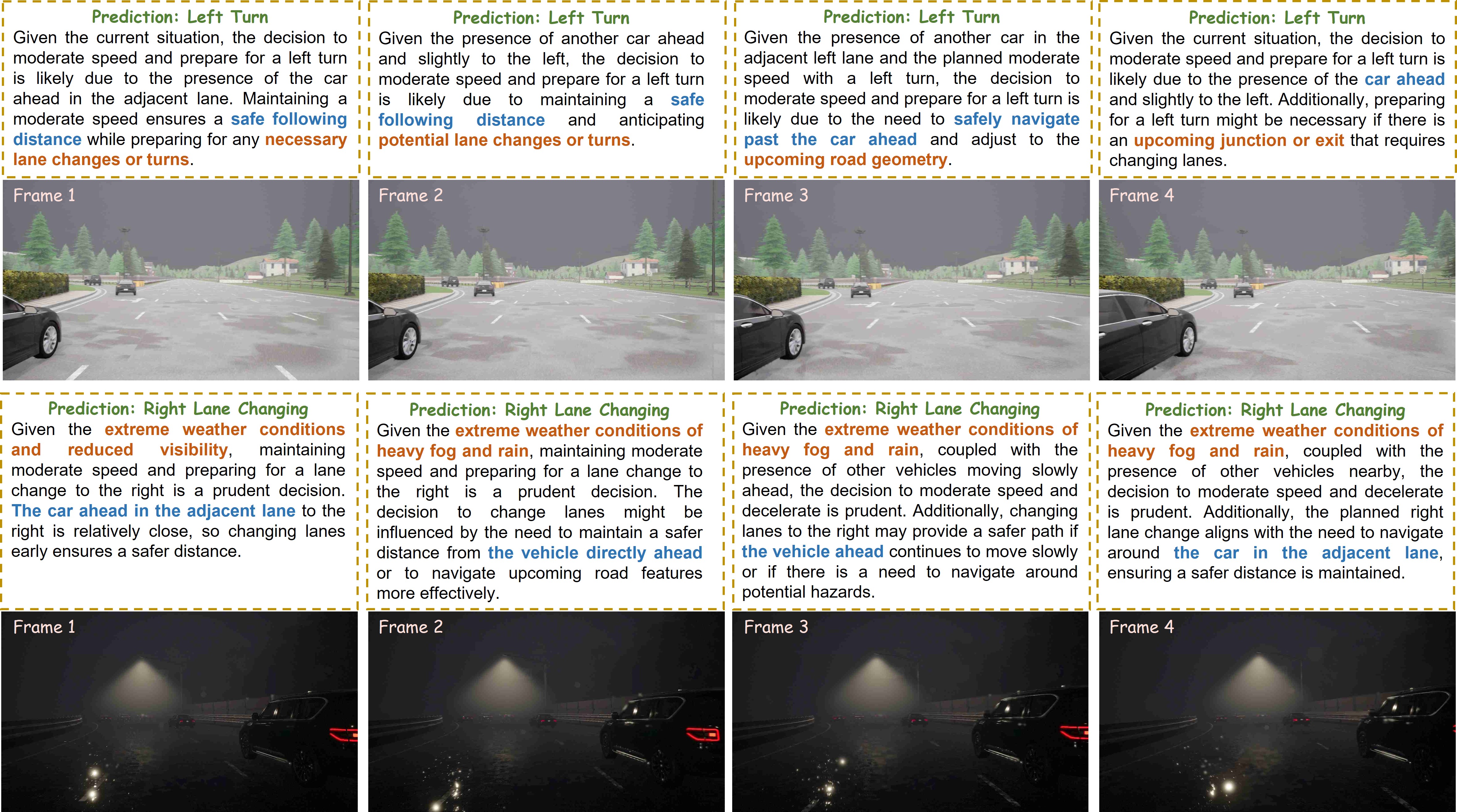

Visualization of temporally coherent reasoning by CogDriver-Agent. We present two challenging driving scenarios: a left turn in clear conditions (top) and a right lane change in adverse weather (bottom). For each, we visualize the agent's frame-by-frame narrative predictions. The agent demonstrates cognitive inertia by maintaining a consistent high-level plan (e.g., 'Left Turn'). Crucially, the underlying rationale is not static; it evolves as the scene unfolds, maturing from reacting to a 'car ahead' (Frame 1) to anticipating an 'upcoming junction' (Frame 4), which illustrates its capacity for sophisticated, long-term reasoning.
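One simple way to quantify the plan consistency shown in this visualization is to count how often the high-level decision changes between consecutive frames. The sketch below is a hypothetical measure, not a metric reported by the authors: the name `decision_jitter`, the definition as a switch rate, and the 'Go Straight' label are assumptions made for illustration.

```python
def decision_jitter(decisions):
    """Fraction of consecutive frame pairs whose high-level decision differs.

    Hypothetical measure: 0.0 means the plan never changes across frames,
    1.0 means it changes at every frame transition.
    """
    if len(decisions) < 2:
        return 0.0
    switches = sum(a != b for a, b in zip(decisions, decisions[1:]))
    return switches / (len(decisions) - 1)

# A plan held across four frames, as in the left-turn example above.
coherent = ["Left Turn", "Left Turn", "Left Turn", "Left Turn"]

# A made-up erratic sequence that flips at every frame.
erratic = ["Left Turn", "Go Straight", "Left Turn", "Go Straight"]

coherent_jitter = decision_jitter(coherent)
erratic_jitter = decision_jitter(erratic)
```

A measure like this only captures the stability of the plan label; it says nothing about whether the accompanying rationale evolves sensibly, which the figure shows qualitatively.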

BibTeX

@article{liu2025CogDrive,
  title={CogDriver: Integrating Cognitive Inertia for Temporally Coherent Planning in Autonomous Driving},
  author={Pei Liu and Qingtian Ning and Xinyan Lu and Haipeng Liu and Weiliang Ma and Dangen She and Xianpeng Lang and Jun Ma}
}